Jelenlegi hely

Estimation of Linear Shape Deformations and its Medical Applications

Electrical and Computer Engineering, Ben-Gurion University of the Negev, Israel (Joseph M. Francos)

Faculty of Engineering, University of Novi Sad, Serbia (Natasa Sladoje)

Centre for Image Analysis, Swedish University of Agricultural Sciences, Uppsala, Sweden (Joakim Lindblad)

Department of Radiology, University of Szeged (Endre Szabó, András Palkó)

Registration is a crucial step in almost all image processing tasks where images of different views or sensors of an object need to be compared or combined. Typical application areas include visual inspection, target tracking, super resolution, shape modeling, object recognition, or medical image analysis. In a general setting, one is looking for a transformation which aligns two images such that one image (template) becomes similar to the second one (observation). Due to the large number of possible transformations, there is a huge variability of the object signature. Hence the problem is inherently ill-defined unless this variability is taken into account.

The classical way to solve this registration problem is to find correspondences between the two images and then determine the transformation parameters from these landmarks usually utilizing an iterative optimization procedure. These algorithms basically fall into two main categories. Feature-based methods aim at establishing point correspondences between the two images. For that purpose, they extract some easily detectable landmarks (e.g., contours, intersection of lines, corners, etc.) from the images and then use these landmarks to find the best match based on a similarity metric. Area-based methods on the other hand treat the problem without attempting to detect salient objects. These methods are sometimes called correlation-like methods because they use a rectangular window to gain some preliminary information about the distortion. They search the position in the observation where the matching of the two windows is the best and then looks for sufficient alignment between the windows in the template and in the observation.

The parametric estimation of two-dimensional affine transformations between two gray-level images has been addressed by Hagege and Francos. Their method provides an accurate and computationally simple solution avoiding both the correspondence problem as well as the need for optimization. The basic idea is to reformulate the original problem as an equivalent linear parameter estimation one which can be easily solved. Their solution, however, makes use of the radiometric information which is not available in binary images.

We proposed an extension of this idea to the binary case. The fundamental difference compared to classical image registration algorithms is that our model works without any landmark extraction, correspondence, or iterative optimization. It makes use of all the information available in the input images and constructs a linear or polynomial system of equations which can be solved exactly. The complexity of the algorithm is linear hence it is potentially capable of registering images at near real-time speed. We proposed several methods to find the distortion between the images.

I. Solution by constructing a polynomial system of equations

II. Solution by constructing linear system of equations

I. Solution by constructing a polynomial system of equations

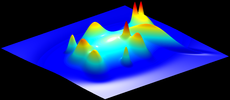

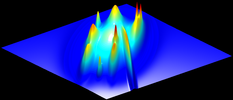

Our first approach applies nonlinear functions to the coordinates of the object points and thus a polynomial system of equations is constructed. The transformation parameters of the unknown affine transformation are provided by the solution of this nonlinear (polynomial) system of equations. From a geometric point of view, the idea of this approach is to match the center of masses of the original and the nonlinearly transformed pairs of template and observation images. Note that this approach can be extended to any diffeomorphic transformation, which can be considered as a framework to solve this problem. The following figure show the effect of two such nonlinear transformations.

|

|

|

|

Original binary image |

omega(X) = [x^2, y^2] |

omega(X) = [x^3, y^3] |

Both in 2D and 3D we examined two different types of solution methods: iterative least-squares solutions and direct analytical solutions.

- In case of a direct method, limited number of equations can be used (according to the degree of freedom of the n-dimensional affine transformation), while an iterative approach allows for an overdetermined system, which may give more stability.

- Direct methods may provide many hundreds or even thousands of possible solutions, many (or even all) of them may be complex thus a solution selection scheme has to be used to produce only one real solution from these. Iterative methods can provide only one real solution, but the search may fall into local minima. To avoid such local minima, usually a sophisticated search strategy is necessary.

- Direct methods can provide full affine solutions only, in case of iterative methods restrictions to lower degree of freedom transformations are easy to impose.

We found that the direct approach gives more stable results, but the iterative one is more precise. It is also possible to combine the two approaches: The direct approach provides the initialization of the iterative one.

I.1. Binary case

In our first method we make use of the characteristic function of the objects in order to construct the system of nonlinear equations. Note that, the system of equations is constructed based on the integrals of coordinates on limited precision digital images (containing discretization errors). Hence, we get better results, when we could use more precise object descriptors (please refer to I.2. Fuzzy extension). We proposed solutions for 2D and 3D registration problems.

Registration results on 2D synthetic images

The proposed algorithm has been tested on a large database of binary images of size 1000×1000. The dataset consists of 56 different shapes and their transformed versions, a total of ? 50 000 images. Furthermore, we reviewed some of the most relevant binary registration approaches and, where an implementation was available, evaluated quantitatively (on 1686 images) the performance of our algorithm with respect to these methods. Some of these results are presented in the following figure.

| Template shapes |

Observations | Heikkilä [1] | Shape Context [2] |

Kannala et al. [3] |

Suk & Flusser [4] |

Proposed |

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| Registration results on synthetic image pairs. The first two columns show the template and its affine distorted observation to be matched while the other columns contain the registration result of each considered method. The template and its registered observation are overlayed such that overlapping pixels are depicted in black while non-overlapping ones are shown in light or dark gray, respectively. | ||||||

- Heikkilä, J.: Pattern matching with affine moment descriptors. Pattern Recognition, vol. 37, pp.1825-1834 (2004)

- Belongie, S., Malik, J. and Puzicha, J.: Shape matching and object recognition using shape contexts. In IEEE Transactions on Pattern Analysis and Machine Intelligence, vol. 24, pp. 509-522 (2002)

- Kannala, J., Rahtu, E., Heikkila, J. and Salo, M.: A new method for affine registration of images and point sets. In Kalviainen, H., Parkkinen, J. and Kaarna, A. (eds.): Proceedings of Scandinavian Conference on Image Analisys. Volume 3540 of Lecture Notes in Computer Science, pp. 224–234, 2005, Springer

- Suk, T. and Flusser, J.: Affine Normalization of Symmetric Objects. In Blanc-Talon, J.; Philips, W.; Popescu, D. and Scheunders, P. (eds.): Proceedings of International Conference on Advanced Concepts for Intelligent Vision Systems. Volume 3708 of Lecture Notes in Computer Science, pp. 100-107, 2005, Springer

Registration results on 3D synthetic images

We compared the performance of our proposed method with two approaches inspired by well known registration methods from the literature based on mutual information and Chamfer matching [1,2]. To speed up the search in the competing methods, as well as to make them more robust, we used a hierarchical approach based on a Gaussian scale space pyramid with 3 levels, where the best candidate at the coarser level is propagated to the finer levels. Since the capture ranges of the objective functions are known to be limited, we initiated the search from 27 different orientations at the coarsest pyramid level.

We used the 375 rigid-body cases from our 3D test database since we had only rigid version of the competing methods. Our proposed method clearly outperformed both MI and Chamfer. The proposed method gave excellent results for 99.5% of the cases and its overall maximal overlap error was 1.15%, whereas, despite starting from 27 orientations, both MI and Chamfer methods fail seriously in more than 26% of the cases. Furthermore, the computing time requirements of the competing methods were about two orders of magnitude greater than ours.

|

|

|

|

| Samples from our 3D database. | |||

- Studholme, C, Hill, D.L.G, and Hawkes, D.J. An overlap invariant entropy measure of 3D medical image alignment. Pattern Recognition, vol. 32, no. 1, pp. 71–86, 1999.

- Borgefors, G. Hierarchical Chamfer matching: a parametric edge matching algorithm. Pattern Analysis and Machine Intelligence, IEEE Transactions on, vol. 10, no. 6, pp. 849–865, 1988.

Registration results on real images

a. Registration of objects segmented from real photographs

After taking the photographs, the objects were segmented. The segmented shapes were used for registration. The following figure shows some results. The template and the observation is segmented, the outline of the segmented objects are shown in blue over the grayscale image. The difference image generated after registration is shown in the third column on the following figure.

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| For each image pair, the first two rows contain the template and observation with overlayed contours of the segmented silhouettes, while the third row shows the difference between the registered shapes | |||

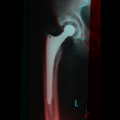

b. Medical application: Hip prosthesis registration

Hip replacement is a surgical procedure in which the hip joint is replaced by a prosthetic implant. Such joint replacement surgery generally is conducted to relieve arthritis pain or fix severe physical joint damage. In the short term post-operatively, infection is a major concern. Deep infection will often require one or two stage revision surgery. Recurrent dislocation is another indication for revision. In the long term, many problems relate to osteolysis from wear debris. An inflammatory process causes bone resorption and subsequent loosening or fracture often requiring revision surgery.

The following figure shows a result of our method. The red channel of the first image represents the template. By applying the inverse of the recovered transformation to the observation, we get a registered image shown in the green channel. The contour overlay image shows the contour of the registered observation object in yellow color over the template image.

|

|

|

|

|

|

|

|

|

|

| Registration of hip prosthesis X-ray images. Each image pair has been taken over a period of time about the same patient. The overlayed contour in the second row shows the aligned contour of the corresponding image in the first row. For each pair, we have also evaluated the measure. | ||||

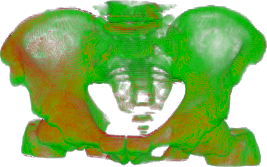

c. Medical application: 3D CT registration

We tested the 3D version of our method on objects extracted from real 3D medical data. The spatial resolution of the CT studies were 0.6–0.8 mm in-slice. The slice distance was 5 mm in 11 cases (cca. 10 million voxels), 1.25 mm in 4 cases (cca. 44 million voxels). We also got three segmented CT studies of the same person acquired by a PET-CT scanner. Their in-slice resolution was 0.9766 mm and the slice distance 3.27 mm (16 milliom voxels). Bone regions from the images were segmented by thresholding and semi-automatic removal of unwanted components (small islands and contrast material delineated inside regions of the colon).

The main challenges here were poor image resolution, substantial segmentation errors, and slightly different placement of the femoral head and lower portion of the spine. Due to the several months difference between the acquisitions, the images differed significantly. The intestine deformed non-linearly and the body contour was usually moved. Contrast material in different parts of the colon was also visible.

Our method provided slightly higher overlap errors than competing methods from the literature but took only a fraction of time required by other methods. The construction of the system of equations took less than half a second even for the largest images, the optimization around 0.01 second. The competing methods took at least two order of magnitude larger time to finish. This shows the clear advantage of our approach. Visual inspection revealed that even overlap errors about 20-25% can be regarded as good or acceptable solutions.

|

|

| Registration of pelvic CT data: superimposed registered 3D bone models (left), and bone contours of the registered template (yellow) overlayed on a CT slice of the observations (right). Overlap error is 14%. | |

|

|

| Registration of pelvic CT data: superimposed registered 3D bone models (left), and bone contours of the registered template (yellow) overlayed on a CT slice of the observations (right). Overlap error is 19%. | |

|

|

| Registration of pelvic CT data: superimposed registered 3D bone models (left), and bone contours of the registered template (yellow) overlayed on a CT slice of the observations (right). Overlap error is 28%. Even the error value is high, the result can be regarded as a fast, coarse approximation. | |

Demo programs

Here you can find a sample implementation and benchmark dataset of the 2D binary image registration algorithm.

Here you can find a sample implementation and benchmark dataset of the 3D binary image registration algorithm.

I.2. Fuzzy extension

We extended our method by investigating the case when the segmentation method is capable of producing fuzzy object descriptions instead of a binary result in both 2D and 3D. It has been shown that the information preserved by using fuzzy representation based on area coverage may be successfully utilized to improve precision and accuracy of several shape descriptors; geometric moments of a shape are among them.

|

|

| Binary and fuzzy representation of a 2D filled arc | |

The performance of the proposed algorithm has been tested and evaluated on a database of 2D synthetic images. The dataset consisted of 39 different shapes and their transformed versions, a total of 2000 images. The fuzzy border representations of the bservation images were generated by using 16×16 supersampling of the pixels close to the object edge and the pixel coverage was approximated by the fraction of subpixels that fall inside the object. The fuzzy membership values of the images were quantized and represented by integer values having k-bit (k = 1,...,8) representation. The result of the test showed that fuzzy representation yields smaller registration errors in average.

|

| The effect of the number of quantization levels of the fuzzy membership function to the precision of image registration, taking into account two different error measures. |

In real applications we need a segmentation method that provides fuzzy results. The moment estimation method presented in [1] assumes a discrete representation of a shape such that pixels are assigned their corresponding pixel coverage values. Even though many fuzzy segmentation methods exist in the literature, very few of them result in pixel coverage based object representations. With an intention to show the applicability of the approach, but to not focus on designing a completely new fuzzy segmentation method, we derived pixel coverage values from an Active Contour segmentation: We have modified the SnakeD plugin for ImageJ by Thomas Boudier. Active Contour segmentation provides a crisp parametric representation of the object contour from which it is fairly straightforward to compute pixel coverage values.

- Sladoje, N., Lindblad, J.: Estimation of moments of digitized objects with fuzzy borders. In Roli, F., Vitulano, S., eds.: Proceedings of International Conference on Image Analysis and Processing. Volume 3617 of Lecture Notes in Computer Science, pp. 188–195, September 2005, Cagliari, Italy, Springer

II. Solution by constructing linear system of equations

We also proposed linear solution to registrate affine transformation of binary images. When we can observe or construct such image features, that invariant under the transformations (e.g. gray-levels), called covariant function pair, then we can apply the idea of Hagege and Francos. Hence, the solution of a linear system of equations provides the parameters of the unknown transformation. The main challenge is how we can construct these covariant function pairs.

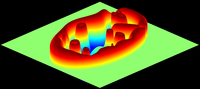

II.1. Using covariant Gaussian densities

In our second approach, in spite of the missing radiometric information, the exact transformation is obtained as a least-squares solution of a linear system. The basic idea is to generate a pair of covariant functions that are related by the unknown transformation. This can be achieved by fitting Gaussian density to the shapes which preserves the effect of the unknown transformation. It can also be regarded as a consistent coloring of the shapes yielding two rich functions defined over the two shapes to be matched.

|

|

||

| (a) | (b) | ||

|

|||

| Gaussian density function fitted over the binary shape yields a consistent coloring. (a) Original binary image; (b) 3D plot of the Gaussian density function over the binary shape; (c) Gaussian density as a grayscale image. | |||

Using the Mahalanobis-distance for defining the covariant functions.

|

|

| (a) | (b) |

| Mahalanobis-distance as covariant function. Level lines are overlayed on the original graylevel images for easier evaluation. (a) Mahalanobis-distance over the original image. (b) Mahalanobis-distance over the transformed image.The transformation was a 1.5× shearing along the x-axis. | |

Then we apply a set of nonlinear functions to create the linear system of equations. Theoretically any function could be applied for constructing the system of equations. In practice, however, the registration result depends on the set of these functions because of the inherent errors due to discretization. In our experiments, we found that the identic function with the trigonometric family gives good results.

|

|||

|

|

||

| sin(x) | sin(2x) | ||

|

|

||

| cos(x) | cos(2x) | ||

| The effect of the applied ωs. Level lines are overlayed on the original graylevel images for easier evaluation. | |||

Some results and comparison

We compared the results of our method with the method proposed by Kannala et. al. [1] and the shape context method proposed by Belongie et. al. [2]. Note that for the shape context method, the images were reduced in size to get the result in acceptable time.

| Template shapes |

|

|

|

|

|

| Observations |  |

|

|

|

|

| Proposed method |  |

|

|

|

|

| Kannala et. al. [1] |

|

|

|

|

|

| Shape Context [2] |

|

|

|

|

|

| Registration results provided by the proposed method and the method proposed by Kannala et. al. [1] and the shape context method proposed by Belongie et. al. [2]. The red lines show the transformed contour of the template images by the registered transformation over the green observations show the results. | |||||

- Kannala, J., Rahtu, E., Heikkila, J. and Salo, M.: A new method for affine registration of images and point sets. In Kalviainen, H., Parkkinen, J. and Kaarna, A. (eds.): Proceedings of Scandinavian Conference on Image Analisys. Volume 3540 of Lecture Notes in Computer Science, pp. 224–234, 2005, Springer

- Belongie, S., Malik, J. and Puzicha, J.: Shape matching and object recognition using shape contexts. In IEEE Transactions on Pattern Analysis and Machine Intelligence, vol. 24, pp. 509-522 (2002)

II.2. Affine alignment of compound objects

The previous method requires an almost perfect segmentation in order to achieve satisfactory alignment. Here we present an elegant, fast and robust solution, where linear equations are constructed by integrating nonlinear functions over corresponding domains derived from compound shapes.

The topology of the objects does not change under the action of the affine group. Thus, there exist a bijective mapping between the template and observation components under the transformation. As a consequence, we can construct covariant functions for each pair of shapes, and the overall shape. Thus we have m + 1 relations, where m is the number of the regions. The correspondence between components is usually not known, we will sum these relations yielding mixtures of unnormalized Gaussian densities which can also be interpreted as a consistent coloring of the template and observation respectively. As a result, we can transform the original binary images into graylevel ones, where corresponding pixels have exactly the same gray value.

|

|

|

|

||

|

|||||

| Gaussian PDFs fitted over a binary shape yields consistent coloring. First pair: template and observation compound object. Second pair: Consostent coloring of the compound objects. The white curves show the objects’ contour. Last pair: 3D plot of the covariant functions. | |||||

One of the most important question of the proposed method is how we can select a finite (intergration) domain which is less dependent from the segmentation error. Obvious approach (as it presented above) to restrict the integration in a finite domain is to use the segmented shape itself as the domain, however, the segmentation error will inherently result in erroneous integrals causing misalignment. Since the equidensity contours of a two variate Gaussian probability density function are ellipsoids centered at the mean, it is natural to chose one of the ellipses of the density fitted to the overall shape as the domain, because they are related by the unknown transformation. We may choose an ellipse according to the two sigma rule, which guarantees that about 95% of values are within the enclosed ellipsoid.

|

|

|

| r = 1 | r = 2 | r = 3 |

| 2σ rule guarantees that about 95% of values are within the enclosed ellipsoid | ||

Making use of the same idea as the pervious case we can construct a linear system of equations by applying a nonlinear set of functions. In our experiments, we found that the identic function with the trigonometric family gives good results.

|

|

||

| sin(x) | sin(2x) | ||

|

|

||

| cos(x) | cos(2x) | ||

| The effect of the applied ωs. Level lines are overlayed on the original graylevel images for easier evaluation. | |||

Robustness

In practice, segmentation never produces perfect shapes. Therefore we also evaluated the robustness of the proposed approach when 10%, 20%,..., 90% of the foreground pixels has been removed from the observation before registration. Our method provides good results up to as high as 50% removed pixels, and results for 90% are also acceptable. In general, our method will perform well as long as the first and second order statistics of shapes does not change dramatically.

|

||||||||||||||||||

Real images: traffic sign matching

Automatic traffic sign recognition usually requires the registration of a reference shape to an observed one. There are many methods to automatically detect and segment signs, but in our experiments we simply performed this task manually via thresholding. The results illustrate that the proposed method provides good results under real-life conditions.

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| The registered contour of the images in the first and third row are overlayed on the observations in the second and fourth row, respectively. | |||